The technological, political, and social advances of the last century would be unfathomable to previous generations. One of the great consequences of these advances has been an exponential increase in access to knowledge. Yet this accessibility has been accompanied by bitter resistance to accepting what are widely regarded as scientific facts.

For example, a 2018 poll revealed that 5 per cent of Americans had doubts that the Earth was spherical, and 2 per cent were firmly convinced that the Earth was actually flat. A 2015 poll of 1,500 Canadians found that almost two in five believed the science on vaccination was not clear, more than one in three believed vaccines should not be mandatory, and more than one in ten believed that vaccines did not prevent disease in individuals. Nor is this phenomenon limited to matters of science. In an alarming survey of 2,500 Britons conducted by the University of Oxford, nearly 20 per cent agreed to some extent with the following statement regarding COVID-19: “Jews created the virus to collapse the economy for financial gain.”

This reveals perhaps the greatest paradox in human history. At a time when we have unparalleled access to information, at the peak of human knowledge and advancement, ignorance is not being eradicated but is instead increasingly propagated at every level of society, including within the halls of government. Worse still, many people remain almost irreconcilably polarized on countless political issues. This phenomenon stands at odds with the very heart of post-Enlightenment Western liberal political theory. Within the Western canon, knowledge has long been conceived predominantly as a body of objective, incontrovertible, everlasting truths about the nature of the world. As such, the free dissemination of knowledge through debate, an uncensored press, and free speech was thought to be critical to the spread of truth. This was reasonable given the context of the time, when people in most of the world lacked these freedoms and could be incarcerated, or worse, for holding a treasonous, heretical, or otherwise blasphemous opinion.

How, then, can we explain growing belief in misinformation alongside ever-greater access to information? Some propose that, contrary to established thought, increased access to information does not reduce belief in misinformation indefinitely, and may in fact increase it once information becomes overly abundant. Fundamental to this perspective is a conception of beliefs as tools. Together, our beliefs are thought to construct a stable perception of reality, allowing individuals to function within it and to build lives with goals, values, and a sense of understanding. Stability, however, is not necessarily accuracy.

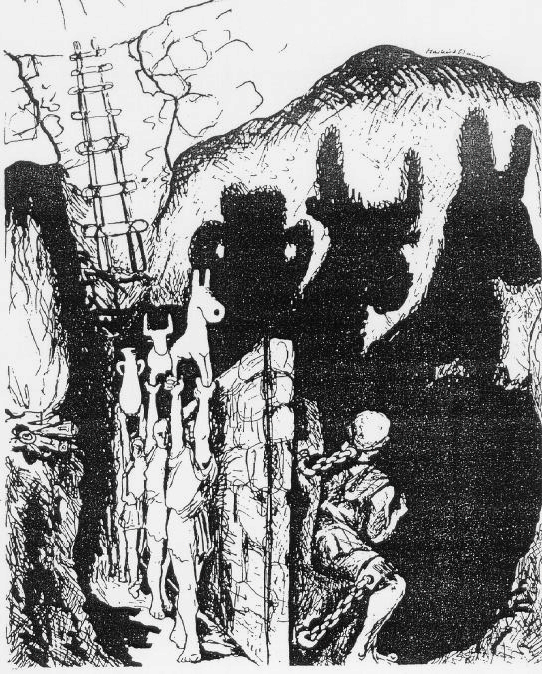

Consider Plato’s allegory of the cave. Within this cave, men are chained their entire lives facing a wall, on which they see only the shadows cast by a fire hidden from their view. They would believe, Plato reasons, that the world consists of nothing other than shadows. This analogy is useful when considering the nature of consciousness. As individuals, we have only limited knowledge of the outside world. Like the men in the cave watching the shadows, we interpret reality through our naturally limited perceptions. Because perception can never capture the world exhaustively, we have evolved, out of necessity, to fill the gaps in our perception of reality with confidence. It is this process that would allow the men in Plato’s cave to hold an inaccurate but stable view of their own reality.

The most dramatic example of this comes from split-brain patients. The human brain is divided into two halves: a right side and a left side. Operating contralaterally, the right side of the brain controls the left side of the body and receives visual input from the left visual field, and the reverse is true for the left side of the brain. In most individuals, the two halves communicate through a structure between them, the corpus callosum. This structure can be severed, however, and the procedure is still occasionally performed as a last resort in treating epilepsy. In these patients, both halves of the brain remain functional but simply cannot communicate with each other.

In the most famous series of experiments, a visual divider was placed in front of the participant so that the ‘right brain’ and the ‘left brain’ could not see what was in each other’s field of vision. A word naming an object could then be shown only to the ‘right brain.’ When asked whether they had seen a word, participants would say they had not, as the center of human consciousness and language is thought to lie in the ‘left brain.’ However, their left hand, controlled by the side of the brain that had seen the word, would reach for the named object if it was in a pile visible to the ‘right brain.’ When asked why they had reached for it, the participant, unaware that the word had been shown to their ‘right brain,’ would confidently offer a plausible but completely unrelated explanation, such as “I thought it just looked cool,” or “I always wanted one of these things as a kid.” Thus a stable yet inaccurate sense of reality was maintained, and the pesky gaps that, left unattended, would wreak untold mental havoc were filled with nary a second thought.

This reflects a fundamental principle of human psychology: our behaviour is often the result of unconscious processes that are later consciously rationalized in order to maintain a consistent perception of reality. Accordingly, psychological studies have repeatedly found that people overwhelmingly seek out information that confirms their pre-existing beliefs, are far less critical of the legitimacy of information they agree with than of information they disagree with, and tend to remember information better when they agree with it. Given the freedom social media affords us to choose the information we are exposed to, this can produce an “echo-chamber” effect, in which individuals select only sources that affirm their own views.

When people have near-infinite access to information, it becomes easy for them to confirm their pre-existing beliefs and identities and to maintain the illusions they have built for themselves. People are, in essence, free to construct their own caves, where the coronavirus may be caused by 5G towers, Donald Trump may never tell a lie, and perhaps the Earth actually is flat. While access to information is of incredible importance, and a necessity in any political body that allows existing ideas to be challenged, this freedom may carry consequences unforeseen by previous generations.

Edited by Jane Warren

The opinions expressed in this article are solely those of the author and they do not reflect the position of the McGill Journal of Political Studies or the Political Science Students’ Association.

Featured image by Markus Maurer and obtained via Wikimedia Commons under a CC BY-SA 3.0 license.